Cache Mapping Techniques


This GATE tutorial covers the different cache memory mapping techniques. These techniques determine how information fetched from main memory is placed in cache memory.

Three mapping techniques are used in cache memory: associative mapping, direct mapping, and set-associative mapping. Let us look at them one by one.

What is cache memory mapping?

Cache memory mapping is the method by which data from main memory is loaded into cache memory. More precisely, the main memory contents referenced by the CPU are brought into cache memory. This can be done in three ways:

(i) Associative mapping Technique

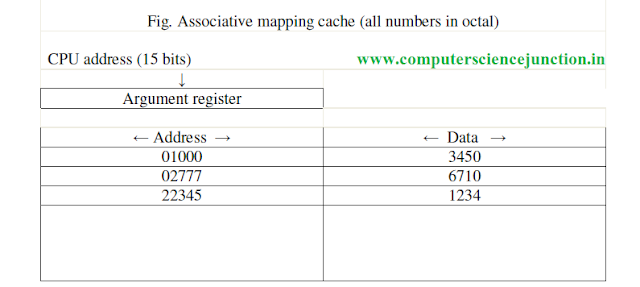

The fastest and most flexible cache organization uses an associative memory. The associative memory stores both the address and the content (data) of the memory word. In the associative mapping technique, any word from main memory can be stored at any location in cache memory.

The 15-bit address is shown as a five-digit octal number and its corresponding 12-bit word is shown as a four-digit octal number. The 15-bit address generated by the CPU is placed in the argument register, and the associative memory is searched for a matching address.

If the address is found, the corresponding 12-bit data is read and sent to the CPU. If no match occurs, the required word is accessed from main memory, and the address-data pair is then transferred to the associative cache memory.

If the cache is full, the question arises of where to store this address-data pair. This is where replacement algorithms come in.

A replacement algorithm determines which existing address-data pair is removed from the cache to free space for the required data.

A simple procedure is to replace the cells of the cache in round-robin order whenever a new word is requested from main memory. This constitutes a first-in first-out (FIFO) replacement policy.
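The lookup and FIFO replacement described above can be sketched in Python. This is only an illustrative model, not a hardware description: the class name `AssociativeCache`, the tiny capacity of two entries, and the memory contents are assumptions made for the example, and a dictionary lookup stands in for the parallel associative search.

```python
from collections import OrderedDict


class AssociativeCache:
    """Fully associative cache sketch with FIFO replacement (hypothetical names)."""

    def __init__(self, capacity, main_memory):
        self.capacity = capacity          # number of address-data pairs the cache holds
        self.main_memory = main_memory    # dict: address -> data word
        self.lines = OrderedDict()        # insertion order doubles as FIFO order

    def read(self, address):
        """Return (data, hit) for a CPU read of `address`."""
        if address in self.lines:         # stands in for the associative search
            return self.lines[address], True
        data = self.main_memory[address]  # miss: fetch the word from main memory
        if len(self.lines) >= self.capacity:
            self.lines.popitem(last=False)  # evict the oldest pair (first in, first out)
        self.lines[address] = data        # store the new address-data pair
        return data, False


# Illustrative 15-bit octal addresses and 12-bit octal data words (assumed values).
memory = {0o01000: 0o3450, 0o02777: 0o6710, 0o22345: 0o1234}
cache = AssociativeCache(capacity=2, main_memory=memory)
results = [cache.read(a)[1] for a in (0o01000, 0o02777, 0o01000, 0o22345, 0o01000)]
print(results)  # [False, False, True, False, False]
```

Note that the hit on address 01000 does not protect it from eviction: FIFO removes the pair that entered the cache first, regardless of how recently it was used.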

(ii) Direct mapping

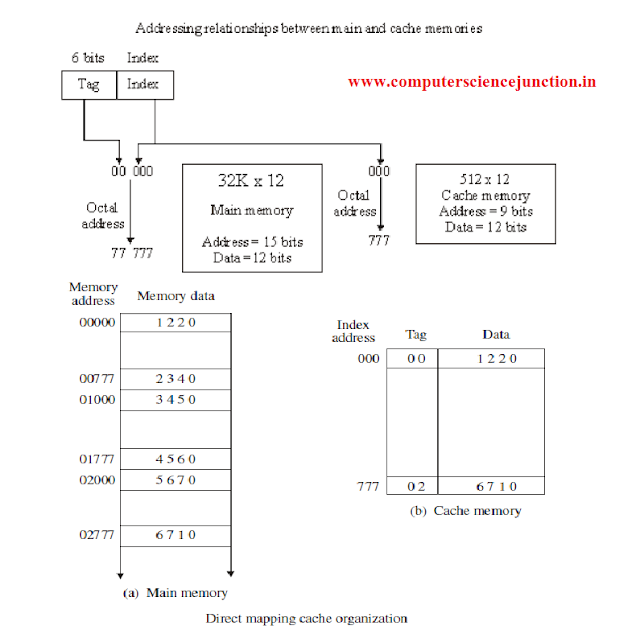

Associative memories are more expensive than random-access memories because of the match logic added to each cell. Direct mapping uses a random-access memory for the cache instead. The 15-bit address generated by the CPU is divided into two fields.

The nine least significant bits form the index field and the remaining six bits form the tag field. The figure shows that main memory needs an address that includes both the tag and the index bits.

The number of bits in the index field equals the number of address bits required to access the cache memory.

Consider the general case where there are 2^k words in cache memory and 2^n words in main memory. The n-bit memory address is divided into two fields: k bits for the index field and n - k bits for the tag field.
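As a small sketch of this field split, the following Python function separates an n-bit address into its tag and index using the values from this tutorial (n = 15, k = 9). The function name `split_address` and its parameter names are assumptions made for illustration.

```python
def split_address(address, n_bits=15, index_bits=9):
    """Split an n-bit address into (tag, index); the index is the low k bits."""
    if not 0 <= address < (1 << n_bits):
        raise ValueError("address does not fit in n bits")
    index = address & ((1 << index_bits) - 1)   # low k bits select the cache word
    tag = address >> index_bits                 # remaining n - k bits form the tag
    return tag, index


tag, index = split_address(0o02777)
print(f"tag={tag:02o} index={index:03o}")  # tag=02 index=777
```

Because 9 bits are exactly three octal digits, the split is easy to read in octal: the last three digits of the address are the index and the first two are the tag.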

- The direct mapping cache organization uses the n-bit address to access main memory and the k-bit index to access the cache. The internal arrangement of the words in the cache memory is shown in the figure.

- Each word in the cache consists of the data word and its associated tag. When a new word is loaded into the cache, its tag bits are stored alongside the data bits. When the CPU generates a memory request, the index field of the address is used to access the cache.

- The tag field of the CPU address is compared with the tag in the word read from the cache. If the two tags match, there is a hit and the desired data word is in the cache.

- If the two tags do not match, there is a miss: the required word is not in the cache and is read from main memory. It is then stored in the cache together with the new tag, replacing the previous value.

- The disadvantage of the direct mapping technique is that the hit ratio can drop considerably if two or more words whose addresses have the same index but different tags are accessed repeatedly.
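The drop in hit ratio mentioned in the last point can be illustrated with a minimal simulation. The sketch below tracks only the tag stored at each index (data words are omitted); the function name `hit_ratio` and the two access sequences are assumptions chosen for the demonstration.

```python
def hit_ratio(addresses, index_bits=9):
    """Simulate tag checks in a direct-mapped cache and return the hit ratio."""
    tags = {}                                   # index -> tag currently stored there
    hits = 0
    for address in addresses:
        index = address & ((1 << index_bits) - 1)
        tag = address >> index_bits
        if tags.get(index) == tag:
            hits += 1                           # stored tag matches: hit
        else:
            tags[index] = tag                   # miss: the new word replaces the old one
    return hits / len(addresses)


conflicting = [0o00000, 0o02000] * 4   # same index 000, tags 00 and 02
friendly = [0o00000, 0o02001] * 4      # different indexes 000 and 001
print(hit_ratio(conflicting))  # 0.0
print(hit_ratio(friendly))     # 0.75
```

In the first sequence the two words keep evicting each other, so every access misses. In the second, only the first access to each word misses.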

To see how the direct-mapping organization operates, consider the numerical example shown in the figure. The word at address 00000 is presently stored in the cache with index = 000, tag = 00, and data = 1220. Suppose the CPU now wants to read the word at address 02000. The index of this address is 000, so it is used to access the cache, and the two tags are then compared.

The cache tag is 00 but the address tag is 02, so the tags do not match and a miss occurs. Main memory is therefore accessed and the data word 5670 is sent to the CPU. The cache word at index address 000 is then replaced with a tag of 02 and data of 5670.
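The numerical example can be traced with a short Python sketch. The addresses and data words are the ones given in the example; the dictionary layout and the helper name `read` are assumptions made for illustration.

```python
INDEX_BITS = 9

# Main memory words from the example, in octal: address -> data.
main_memory = {0o00000: 0o1220, 0o02000: 0o5670}

# Initial cache state: index 000 holds tag 00 and data 1220.
cache = {0o000: (0o00, 0o1220)}     # index -> (tag, data)


def read(address):
    """Read one word through the direct-mapped cache; return (data, hit)."""
    index = address & ((1 << INDEX_BITS) - 1)
    tag = address >> INDEX_BITS
    entry = cache.get(index)
    if entry is not None and entry[0] == tag:
        return entry[1], True               # tags match: hit
    data = main_memory[address]             # miss: go to main memory
    cache[index] = (tag, data)              # replace the cache word at this index
    return data, False


data, hit = read(0o02000)
print(f"data={data:04o} hit={hit}")                          # data=5670 hit=False
tag, word = cache[0o000]
print(f"index 000 now holds tag={tag:02o} data={word:04o}")  # index 000 now holds tag=02 data=5670
```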

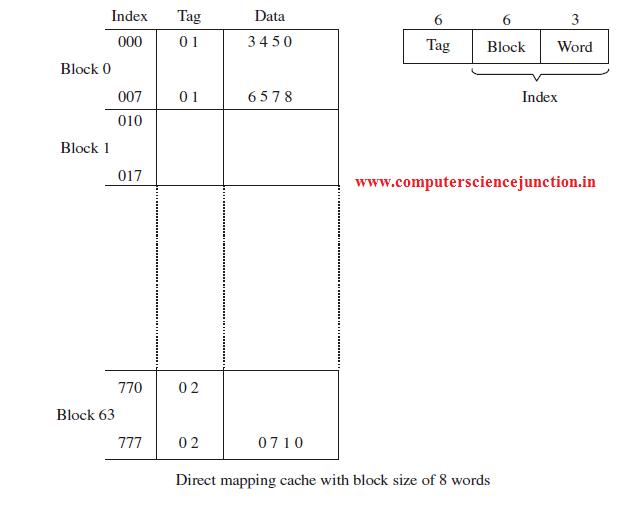

When the cache is organized in blocks of several words, the index field is divided into two parts: the block field and the word field. In a 512-word cache with a block size of 8 words there are 64 blocks. Six bits are needed to identify one of the 64 blocks, so the block field is 6 bits wide. Three bits are needed to identify one of the 8 words within a block, so the word field is 3 bits wide.
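The block and word fields can be extracted from the 9-bit index in the same way the index was extracted from the address. The following is a small sketch; the function name `split_index` is an assumption, and the word field is taken to be the low 3 bits of the index.

```python
def split_index(index, block_bits=6, word_bits=3):
    """Split a 9-bit index into (block, word) for 64 blocks of 8 words each."""
    if not 0 <= index < (1 << (block_bits + word_bits)):
        raise ValueError("index does not fit in block_bits + word_bits")
    word = index & ((1 << word_bits) - 1)   # low 3 bits: word within the block
    block = index >> word_bits              # high 6 bits: block number
    return block, word


print(split_index(0o777))  # (63, 7)
print(split_index(0o010))  # (1, 0)
```

Index 777 (octal) is the last word of the last block, and index 010 is the first word of block 1.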

(iii) Set associative mapping

The disadvantage of direct mapping is that two words with the same index but different tag values cannot reside in the cache at the same time.

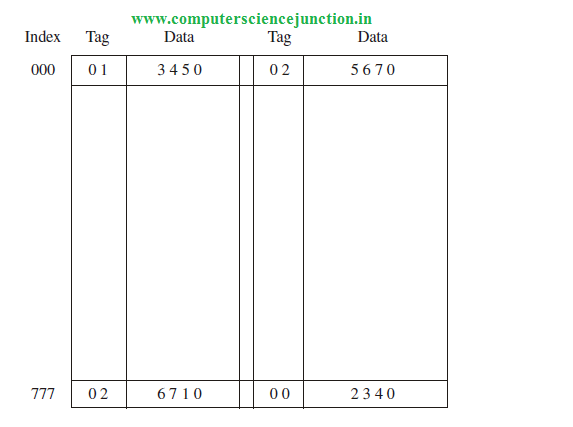

A third type of cache organization, called set-associative mapping, improves on the direct-mapping organization in that each word of cache can store two or more words of memory under the same index address. Each data word is stored together with its tag, and the tag-data items in one word of cache are said to form a set.

An example of a set-associative cache with a set size of two is shown in the figure. Each index address refers to two data words and their associated tags.

Each tag requires 6 bits and each data word has 12 bits, so the word length is 2 x (6 + 12) = 36 bits. A 9-bit index address can accommodate 512 words, so the cache memory size is 512 x 36.

It can accommodate 1024 words of main memory since each word of cache contains two data words. In general, a set-associative cache of set size k will accommodate k words of main memory in each word of cache.

When the CPU generates a memory request, the index value of the address is used to access the cache. The tag field of the CPU address is then compared with both tags in the cache to determine whether a match occurs.

The comparison is performed by an associative search of the tags in the set, similar to an associative memory search; hence the name “set-associative.”

The hit ratio, and with it cache performance, improves as the set size increases, because more words with the same index but different tags can reside in the cache.

When a miss occurs in a set-associative cache and the set is full, it is necessary to replace one of the tag-data items with a new value using a cache replacement algorithm.
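A two-way set-associative cache can be sketched as follows. The text does not fix a particular replacement algorithm for the set, so FIFO within each set is assumed here; the class name `SetAssociativeCache` and the data word at address 01000 are also assumptions made for the example.

```python
from collections import deque


class SetAssociativeCache:
    """Set-associative cache sketch; FIFO replacement within a set is an assumption."""

    def __init__(self, main_memory, set_size=2, index_bits=9):
        self.main_memory = main_memory   # dict: address -> data word
        self.set_size = set_size         # tag-data items per cache word
        self.index_bits = index_bits
        self.sets = {}                   # index -> deque of (tag, data), oldest first

    def read(self, address):
        """Return (data, hit) for a CPU read of `address`."""
        index = address & ((1 << self.index_bits) - 1)
        tag = address >> self.index_bits
        items = self.sets.setdefault(index, deque())
        for stored_tag, data in items:   # compare against every tag in the set
            if stored_tag == tag:
                return data, True
        data = self.main_memory[address]
        if len(items) >= self.set_size:
            items.popleft()              # set is full: replace the oldest item
        items.append((tag, data))
        return data, False


# Three words that all share index 000 (tags 00, 02, and 01).
memory = {0o00000: 0o1220, 0o02000: 0o5670, 0o01000: 0o3450}
cache = SetAssociativeCache(memory)
trace = [0o00000, 0o02000, 0o00000, 0o02000, 0o01000, 0o00000]
hits = [cache.read(a)[1] for a in trace]
print(hits)  # [False, False, True, True, False, False]
```

Addresses 00000 and 02000 now coexist under index 000, so the repeated accesses hit where a direct-mapped cache would miss. The third word with the same index fills the set beyond its size and forces a replacement.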

I hope this Computer Science Study Material for Gate will be beneficial for gate aspirants.

Also read : Different cache levels and Cache Performance